Recently I was tasked with trimming and combining some videos for work. We had filmed 16 hours of lectures and needed to get them ready to publish. Unfortunately, the cameras we used split the video into 19-minute segments that then had to be recombined into longer videos. I got to work.

It was recommended to me that I just use Windows Movie Maker. I was skeptical, but I decided to give it a try. I copied the first 3 videos into the Windows Movie Maker workspace and waited. After waiting several minutes for the files to import and seeing the progress bar barely move, I decided there had to be a better way. I had used FFMPEG in the past in the form of SUPER media encoder. This time, however, I decided to go directly to the source and use FFMPEG from the command line. Windows 7 (64-bit) is my primary operating system, so these instructions are written for it. Likewise, my videos are mp4 files, so the commands below use that format.

Disclaimer: I am not an FFMPEG expert. This is just what I found that worked for me. No guarantees that it is the most efficient way or that it will even work for you.

1) Download FFMPEG

FFMPEG can be found at the link above. Select the download for your operating system (I used a Windows static version) and put it in a convenient folder. Unzip it, and it is ready to use.

2) Separate Files into Directories

The first thing you are going to want to do is separate each set of files that you want to join into its own directory (i.e. folder). To make your life easier, keep the directory name short and leave out any spaces. For example, use "JohnsBirthday" instead of "Johns Birthday May 22 1934".

3) Run Batch File and Navigate to Directory

Run the batch file in the folder where you unzipped FFMPEG. It should be called "ff-prompt.bat". This opens a command prompt in the directory where the batch file is located, with FFMPEG ready to run.

Now you need to navigate in the command prompt to the directory (the folder) where you put the videos. If you know how to do this, use your favorite method. If you don't, here are some basic instructions. "cd" is the command to change directory. The usage is below.

- Use "cd .." to go up one level

- Use "cd folderName" to navigate to a folder in your current directory

If you get lost, you could always put the videos you are working on in the folder with the batch file. Then you would not have to navigate anywhere.

4) Combine Videos Using FFMPEG

To combine the videos we are going to "concatenate" them. If you are familiar with programming, it is the same concept as concatenating strings. You are taking one and sticking it on the end of the other.

HERE is the link to the ffmpeg wiki page with the documentation, but I will copy the steps below that I used.

1) Copy the command below into the command line you have open. Replace "mp4" with the file type of your videos (.wav, .mov, etc.). Then run the command. It should execute very quickly.

(for %i in (*.mp4) do @echo file '%i') > mylist.txt

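This creates a list of the files to be concatenated, with one line per video in the form file 'name.mp4'. Alternatively, you can create the file by hand. One note on this: I am not sure how it determines the order. It seems to be by name, so open mylist.txt and check that the segments are listed in the order you want before moving on. Also, spaces in the names caused problems for me, so make sure no file names have spaces.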
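If you would rather not depend on whatever order the command prompt lists the files in, the list can also be built by a small script. Below is a minimal sketch in Python (an assumption on my part; nothing above requires Python). The function names natural_key, build_concat_list and write_concat_list are made up for this example. It sorts names so that "clip2.mp4" comes before "clip10.mp4" and writes the same file '...' lines as the command above.

```python
import re
from pathlib import Path


def natural_key(name):
    """Sort key that compares runs of digits as numbers, so 'clip2' < 'clip10'."""
    parts = re.split(r"(\d+)", name)
    # re.split with a capturing group puts the digit runs at the odd indexes.
    return [int(part) if index % 2 else part.lower()
            for index, part in enumerate(parts)]


def build_concat_list(names):
    """Return the text of the list file: one "file '<name>'" line per video."""
    ordered = sorted(names, key=natural_key)
    return "".join(f"file '{name}'\n" for name in ordered)


def write_concat_list(folder, pattern="*.mp4", list_name="mylist.txt"):
    """Write the list file for every matching video in `folder`; return its path."""
    folder = Path(folder)
    names = [path.name for path in folder.glob(pattern)]
    list_path = folder / list_name
    list_path.write_text(build_concat_list(names))
    return list_path
```

Calling write_concat_list("JohnsBirthday") would create JohnsBirthday\mylist.txt. The sketch does not escape single quotes in file names, so it assumes names without quotes, in line with the advice above to keep names simple.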

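2) Now copy this into the command line you have open. Note that the ".mp4" on the output file name was added by me to get it to work. The wiki implies you shouldn't need it, but FFMPEG uses the extension of the output file to decide which format to write, which is probably why it failed for me without one.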

ffmpeg -f concat -i mylist.txt -c copy output.mp4

This will take some time to execute, but it will still be much faster than re-encoding the video. I was combining about 1.5 GB of video and it took 3 minutes.
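If you end up scripting this step, the same command can be launched from Python. This is only a sketch: it assumes ffmpeg can be found on the PATH (for example because the script is started from the ff-prompt window), and concat_command and run_concat are names invented for this example.

```python
import subprocess


def concat_command(list_file="mylist.txt", output="output.mp4"):
    """Build the argument list for the stream-copy concat command shown above."""
    return ["ffmpeg", "-f", "concat", "-i", list_file, "-c", "copy", output]


def run_concat(list_file="mylist.txt", output="output.mp4"):
    """Run ffmpeg and raise CalledProcessError if it reports a failure."""
    subprocess.run(concat_command(list_file, output), check=True)
```

Passing the arguments as a list instead of one long string means Python handles the quoting, and check=True makes a failed run stop the script instead of passing silently.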

|

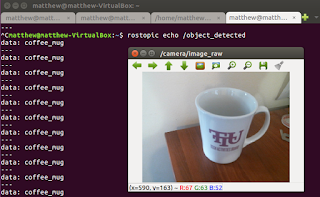

| Command Line at the End of Successful Execution |

3) Verify the video. I went through and double checked the points where it transitioned from one segment to the next to make sure everything worked out. This step is obviously optional.

5) Trim Videos Using FFMPEG

Trimming is a bit more complicated. A common question is "Why does my audio get desynced when trimming with FFMPEG?" The cause is something called "key frames", which the compressed video inside mp4 files uses. A key frame stores a complete picture, while the frames between key frames only store what changed. I did not take the time to research those all that much, but the long and short seems to be that you have to cut at a key frame if you want to trim a video without re-encoding or desyncing the audio. This is the command line instruction.

ffmpeg -ss 00:03:35 -i output.mp4 -t 00:57:04 -c copy outputtrimmed.mp4

As best I understand it, here is what is happening. Because -ss comes before -i, FFMPEG seeks in the file "output.mp4" to the closest key frame before the time 00:03:35 instead of decoding everything up to that point, so this part is fast. It then copies from that key frame into the file "outputtrimmed.mp4" without re-encoding, which is also fairly fast. Note that -t is a duration, not an end time: the command keeps 57 minutes and 4 seconds of video, so the cut ends at roughly 01:00:39 in "output.mp4". This does not allow you to trim at exact points, but it allows you to do it quickly and without losing audio sync (both important in my book).
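One thing that is easy to trip over is that ffmpeg's -t option takes a duration, not an end time. If you know the start and end points you want, the duration has to be worked out by subtraction. The sketch below does that arithmetic in Python; the helper names (to_seconds, to_timestamp, duration_between, trim_command) are made up for illustration and it only handles whole-second HH:MM:SS values.

```python
def to_seconds(timestamp):
    """Convert 'HH:MM:SS' to a whole number of seconds."""
    hours, minutes, seconds = (int(part) for part in timestamp.split(":"))
    return hours * 3600 + minutes * 60 + seconds


def to_timestamp(total_seconds):
    """Convert a whole number of seconds back to 'HH:MM:SS'."""
    hours, remainder = divmod(total_seconds, 3600)
    minutes, seconds = divmod(remainder, 60)
    return f"{hours:02d}:{minutes:02d}:{seconds:02d}"


def duration_between(start, end):
    """Return the value for -t that cuts from `start` to `end`."""
    length = to_seconds(end) - to_seconds(start)
    if length <= 0:
        raise ValueError("end must be later than start")
    return to_timestamp(length)


def trim_command(source, start, end, output):
    """Build the stream-copy trim command, with -ss placed before -i."""
    return ["ffmpeg", "-ss", start, "-i", source,
            "-t", duration_between(start, end), "-c", "copy", output]
```

For example, duration_between("00:03:35", "01:00:39") gives "00:57:04", which matches the -t value in the command above.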

6) Repeat

Do this for all of your videos.
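With many folders, repeating the steps by hand gets tedious. As a rough sketch only, the Python function below (plan_jobs is a hypothetical name, and the layout of one subfolder per set of videos is the one described in step 2) lists which folders contain mp4 files and proposes an output file for each. It does not run ffmpeg itself.

```python
from pathlib import Path


def plan_jobs(root):
    """Return one (folder, output file) pair per subfolder that holds .mp4 files."""
    root = Path(root)
    jobs = []
    for folder in sorted(path for path in root.iterdir() if path.is_dir()):
        if any(folder.glob("*.mp4")):
            # Put the combined video next to the folders, not inside them,
            # so it is never picked up as an input segment on a later run.
            jobs.append((folder, root / f"{folder.name}.mp4"))
    return jobs
```

Each pair can then be fed to the list and concat steps from section 4, one folder at a time.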

Conclusion

So that is how I combined and trimmed a bunch of 19-minute segments into hour-long blocks totaling about 16 hours, quickly and without re-encoding the video. No guarantees it will work for you. I just had a hard time finding detailed instructions on this, so I thought I would post. One note: I imagine trimming before you combine would speed up the process a bit. For me it just made sense to do it after so I would not have to juggle so many files. The process isn't that long anyway.

That's it! If you have any questions or comments, post them below. If you have any other methods to try or ways to make this better, post those too!

Until later,

-Matthew